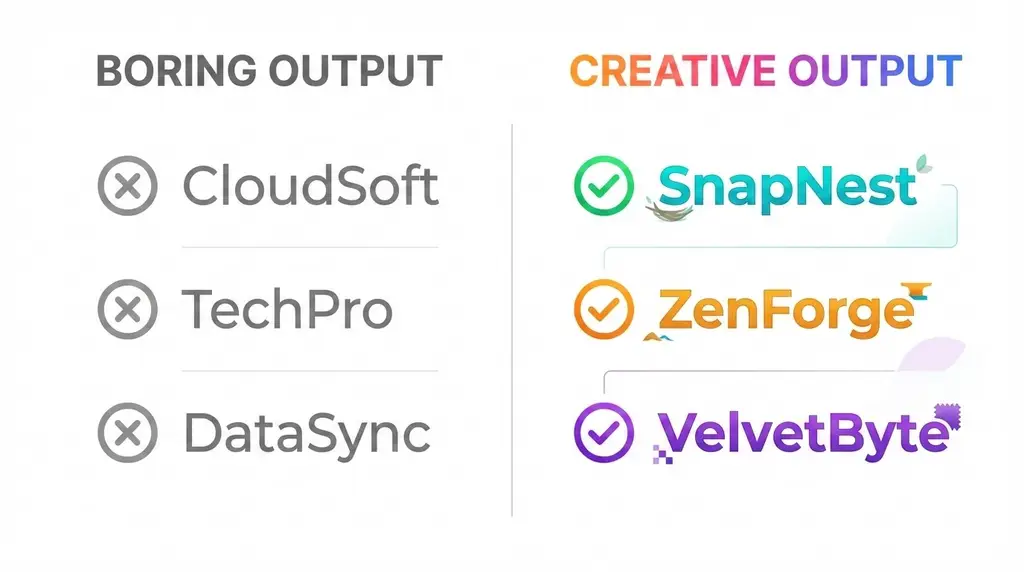

Most AI domain name generators produce the same predictable garbage. Type in "cloud storage" and you get CloudStore, StoreCloud, CloudBox, BoxCloud - an endless parade of keyword mashups that are already taken and wouldn't make good brand names anyway.

When we started building our naming tool, we had one non-negotiable goal: the AI had to brainstorm like a real creative team would. That meant accepting something uncomfortable - sometimes it would suggest names that weren't great. And that's exactly what we wanted.

The Creative Brainstorming Problem

Here's the dirty secret of AI naming tools: making an LLM produce reliable, predictable output is easy. Making it genuinely creative is hard.

Early in development, we could get the AI to spit out 50 perfectly safe, keyword-derived names in seconds. The problem? They were all boring. And boring names share a fundamental flaw - they're obvious, which means someone already registered them years ago.

According to Harvard Business Review research on LLMs and creativity, AI systems can genuinely enhance creative brainstorming through two pathways: persistence (generating hundreds of variations without fatigue) and flexibility (combining distant concepts in unexpected ways). But unlocking that flexibility requires deliberate prompt engineering.

Our early prompts were too constrained. We'd ask for "professional domain names for a cloud storage company" and get exactly that - professional, predictable, and useless.

The breakthrough came when we shifted our goal from "generate good names" to "brainstorm like humans would."

Why "Bad" Names Are Actually Good

Real brainstorming sessions produce terrible ideas. That's the point. When a creative team names a product, the whiteboard fills with puns that don't land, misspellings that confuse people, and concepts that seemed clever at 2 AM but look ridiculous in daylight.

The value isn't in each individual idea being good. It's in the volume and variety that eventually surfaces something great.

We rebuilt our prompts to encourage this natural creative messiness. The result? Our system now generates names across a genuine quality spectrum. Some score 95+/100. Some score 55. The scoring system (covered in our 5-minute brand audit guide) ranks everything so the cream rises to the top while the mediocre options sink to the bottom.

Users can still see lower-scoring names if they want to. Sometimes a name that scores 60 sparks an idea for something better. But they're not forced to wade through garbage pretending it's gold - the system is honest about what's strong and what's weak.
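As a rough illustration of that ranking step, here is a minimal Python sketch. The `Candidate` type, the `rank_candidates` helper, the cutoff of 80, and the sample names and scores are all assumptions made up for this example, not the tool's actual code or data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    score: int  # overall score on a 0-100 scale


def rank_candidates(candidates, cutoff=80):
    """Sort candidates by score (highest first) and split them at a cutoff.

    The cutoff is a hypothetical threshold: names at or above it are shown
    first, and the rest stay available for anyone who wants to browse them.
    """
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    top = [c for c in ranked if c.score >= cutoff]
    rest = [c for c in ranked if c.score < cutoff]
    return top, rest


# Invented sample data for illustration only.
candidates = [
    Candidate("Nimbari", 96),
    Candidate("StoreCloud", 55),
    Candidate("Vaultly", 60),
    Candidate("Cirrova", 88),
]
top, rest = rank_candidates(candidates)
```

Nothing is discarded here: the lower-scoring list is kept and ordered, so a 60-point name can still spark a better idea.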

Iterating Across Every Industry

The tech camp I run in the UK teaches children about 3D printers, drones, and game development. That background taught me something crucial about building tools: you can't assume your first solution works everywhere.

Our initial prompts were tuned for SaaS products. They worked great for naming AI tools and developer platforms. But when we tested them on a Thai food truck in Florida? A gourmet cookie bakery in the UK? An Austrian drone photography service? The results were mediocre.

Different industries have different naming conventions. A fintech startup needs to signal trust and stability. A food truck needs personality and verbal clarity (people order by shouting over street noise). A drone photography business needs to sound professional without being corporate.

We spent weeks testing prompts across dozens of industry scenarios:

- Local businesses (food trucks, boutiques, service providers)

- SaaS startups (B2B tools, developer platforms, consumer apps)

- Creative services (photography, design, consulting)

- E-commerce (specialty retail, DTC brands)

Each industry taught us something. The prompts evolved to handle contextual requirements - understanding when verbal clarity matters more than sophistication, when a playful name works versus when it would destroy credibility.

The .com Availability Problem

Here's a prompt engineering challenge most people don't think about: .com domains are extremely scarce, and the AI doesn't know what's available.

When you ask an LLM to suggest domain names, it has no real-time knowledge of registration status. It'll happily recommend CloudNine.com (taken since 1997), Spark.io (taken), or any number of obvious names that were registered decades ago.

We couldn't just generate names and hope for the best. The prompts needed to push the AI toward names that might actually be available - which meant steering away from obvious patterns and toward creative, invented, or unexpected combinations.

This is where temperature settings became critical. Higher temperature values in LLMs increase randomness and creativity at the cost of coherence. Too low, and you get boring, predictable names. Too high, and you get gibberish.

We found a sweet spot that encouraged creativity while maintaining brandability. The AI suggests names that are just unusual enough to have a realistic chance of being available, but not so bizarre that they'd confuse customers.
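To make the temperature trade-off concrete, here is a small Python sketch of the standard temperature-scaled softmax that underlies LLM sampling. The logits and the two temperature values are arbitrary numbers picked for illustration, not settings from our system.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by a sampling temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Three hypothetical next-token scores: one "obvious" choice and two less likely ones.
logits = [2.0, 1.0, 0.1]
low = softmax_with_temperature(logits, 0.5)   # sharper: the obvious token dominates
high = softmax_with_temperature(logits, 1.5)  # flatter: unlikely tokens get more weight
```

At the lower temperature the most likely token takes most of the probability mass, which is where the predictable names come from. At the higher temperature the distribution flattens, so unusual continuations get sampled more often.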

Making the AI Brutally Honest

Most AI tools are sycophants. Ask ChatGPT if your terrible business name is good, and it'll find something nice to say about it. That's useless for domain naming.

We engineered our prompts to be brutally honest. When the AI evaluates a name - whether it generated the name or the user suggested it - it tells the truth:

- "This name is confusingly similar to an existing brand in your space"

- "The spelling will cause problems - people will search for the wrong thing"

- "This sounds unprofessional for your target audience"

- "There's a trademark risk with this name you should investigate"

This honesty extends to the AI's own suggestions. The verdicts in our naming results don't sugarcoat weak candidates. A 60/100 name gets a verdict explaining why it's mediocre.

Building this required careful prompt engineering around evaluation criteria. The AI needed to understand our five brand metrics - Brand Fit, Verbal Clarity, Authority, SEO Potential, and Resale Value - and apply them consistently without bias toward its own creative output.
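One way to picture consistent scoring is a single function that combines the five metrics into an overall score and takes no input about where the name came from. This Python sketch is an assumption for illustration: the equal default weights, the `overall_score` name, and the sample numbers are hypothetical, and our real weighting may differ.

```python
METRICS = (
    "brand_fit",
    "verbal_clarity",
    "authority",
    "seo_potential",
    "resale_value",
)


def overall_score(scores, weights=None):
    """Combine per-metric scores (0-100) into one weighted overall score.

    The function only sees metric scores, never who proposed the name,
    so AI-generated and user-suggested names go through the same math.
    Equal weights are an assumption for this sketch.
    """
    missing = [m for m in METRICS if m not in scores]
    if missing:
        raise KeyError(f"missing metric scores: {missing}")
    weights = weights or {m: 1.0 for m in METRICS}
    total_weight = sum(weights[m] for m in METRICS)
    weighted_sum = sum(scores[m] * weights[m] for m in METRICS)
    return round(weighted_sum / total_weight)


# Invented scores for a mediocre candidate.
example = {
    "brand_fit": 70,
    "verbal_clarity": 80,
    "authority": 50,
    "seo_potential": 40,
    "resale_value": 60,
}
result = overall_score(example)
```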

The Metrics We Considered (And Dropped)

During development, we experimented with additional scoring dimensions that seemed promising but ultimately didn't make the cut:

Domain registration history - Whether the domain had been registered before. Interesting data, but not predictive of future success.

Social handle availability - Checking if matching Twitter/Instagram handles existed. Too volatile (handles get claimed and abandoned constantly) and less important than the domain itself.

Phonetic uniqueness - How distinct the name sounded from competitors. Theoretically useful, but overlapped too much with our Verbal Clarity metric.

International pronunciation - Cross-language clarity for global brands. Important for some users, but too specialized to weight equally for everyone.

Character efficiency - Ratio of meaningful characters to total length. Interesting academic metric, but didn't correlate with real-world brand success.

We dropped these because the five core metrics - Brand Fit, Verbal Clarity, Authority, SEO Potential, and Resale Value - proved more universally applicable. They work whether you're naming an AI startup or a local bakery.

What Actually Worked

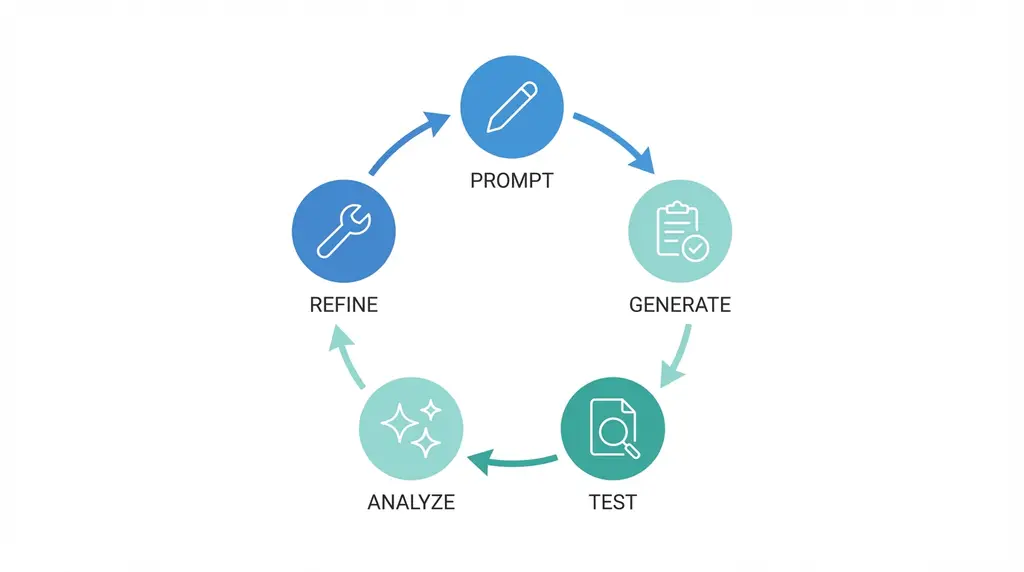

After months of iteration, here's what we learned about prompt engineering for creative naming:

1. Context depth matters more than prompt length. Early prompts were verbose. Later prompts were focused. The AI needs enough context to understand the business, industry, and naming constraints - but not so much that it gets confused or constrained.

2. Examples shape output more than instructions. Telling the AI to "be creative" is useless. Showing it examples of creative names - and explaining why they work - produced dramatically better results.

3. Negative constraints are as important as positive ones. "Don't suggest keyword mashups" and "avoid generic suffixes like -ify and -ly" were as valuable as "suggest brandable, memorable names."

4. Temperature tuning is task-specific. Brainstorming benefits from higher creativity settings. Evaluation and scoring require lower temperature for consistency. We run different temperature configurations for different parts of the workflow.

5. Human judgment stays essential. No matter how good the prompts get, AI naming is a decision-support tool, not a replacement for human judgment. IBM's prompt engineering guide emphasizes this: the best AI systems augment human decision-making rather than replacing it.
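Points 2 through 4 can be sketched together in a few lines of Python. Everything here is hypothetical and for illustration only: the `NamingBrief` type, the `build_brainstorm_prompt` helper, the `STAGE_TEMPERATURE` values, and the sample business and example name are not our production prompts or settings.

```python
from dataclasses import dataclass, field

# Placeholder per-stage settings: higher for brainstorming, lower for scoring.
STAGE_TEMPERATURE = {"brainstorm": 1.0, "evaluate": 0.2}


@dataclass
class NamingBrief:
    business: str
    industry: str
    examples: list = field(default_factory=list)  # (name, why it works) pairs
    avoid: list = field(default_factory=list)     # negative constraints


def build_brainstorm_prompt(brief):
    """Assemble a prompt from context, worked examples, and negative constraints."""
    lines = [
        f"Brainstorm brand names for: {brief.business}",
        f"Industry: {brief.industry}",
    ]
    if brief.examples:
        lines.append("Examples of the style we want, with reasons:")
        lines += [f"- {name}: {why}" for name, why in brief.examples]
    if brief.avoid:
        lines.append("Do not:")
        lines += [f"- {rule}" for rule in brief.avoid]
    return "\n".join(lines)


# Invented brief for illustration.
brief = NamingBrief(
    business="Thai food truck in Florida",
    industry="Local food service",
    examples=[("Wok This Way", "a pun that is easy to say over street noise")],
    avoid=[
        "suggest keyword mashups",
        "use generic suffixes like -ify and -ly",
    ],
)
prompt = build_brainstorm_prompt(brief)
```

The resulting `prompt` string would go to whichever model client you use, with `STAGE_TEMPERATURE["brainstorm"]` for generation and `STAGE_TEMPERATURE["evaluate"]` for scoring.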

From Naming Tool to Naming Ourselves

Building URLGenie with these principles led to an obvious test case: use our own tool to name itself.

We ran the prompt engineering system we'd built, evaluated the output with the scoring framework we'd developed, and picked the top candidate. URLGenie.ai scored 98/100 and passed every risk check.

That story is covered in detail in our naming case study. But the point here is simpler: the prompt engineering that powers our tool isn't theoretical. We bet our brand on it working.

The next time you're stuck naming something, remember this: the goal isn't perfection from the AI. It's volume, variety, and honest evaluation. Let the AI brainstorm messily. Let it suggest some duds alongside the gems. Then use data - not gut feeling - to separate the winners from the losers.

That's the prompt engineering philosophy behind URLGenie. And based on the names coming out of the system, it's working.